Introduction

In data center server cabinets, it’s common to find numerous solid-state drives (SSDs) that play a vital role in data storage. The controller chip acts as the brain of the SSD, efficiently managing data flow in and out of storage units.

Storage is a key piece of infrastructure in the era of large models. Data has become the core resource of AI, so storage technology determines how efficiently large models can process data, which affects both training and inference speed. As training datasets grow exponentially, balancing storage cost against performance becomes crucial.

“Storage is definitely a large market,” said Zhong Xiaohui, CFO of storage chip design company InnoGrit. She noted that computing power, storage capacity, and transmission capability tend to develop together, each driving the others. The current surge in large models is pushing storage technology forward and raising the bar for differentiated competition and technological iteration among storage chip companies and SSD manufacturers.

Importance of Storage and Computing Power

The main hardware components of an SSD include NAND flash memory chips, DRAM cache, and the controller chip. If we liken the data that needs to be stored to cars, then the SSD is a giant parking lot, with storage units on the flash chips acting as parking spaces and the controller chip serving as the “manager” directing each car to enter and exit its parking space accurately and quickly.

The controller chip is essentially the brain of the SSD: through its firmware, it carries out complex operations such as reading, writing, and encrypting data. InnoGrit develops these core storage components, offering SSD controller chips as well as complete SSD solutions.
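To make the parking-lot analogy concrete, here is a toy Python sketch of the kind of bookkeeping a controller performs: a table that maps logical addresses (the cars) to physical flash pages (the parking spaces). It assumes a simple page-level mapping and leaves out garbage collection, wear leveling, and error correction. The class and method names are hypothetical and do not describe InnoGrit's actual firmware.

```python
class ToyFlashTranslationLayer:
    """Hypothetical page-level mapping: logical address -> physical flash page."""

    def __init__(self, num_pages: int) -> None:
        self.free_pages = list(range(num_pages))  # empty "parking spaces"
        self.mapping: dict[int, int] = {}          # logical address -> physical page
        self.pages: dict[int, bytes] = {}          # physical page -> stored data
        self.stale: set[int] = set()               # pages holding outdated copies

    def write(self, lba: int, data: bytes) -> int:
        # NAND pages cannot be overwritten in place, so every write
        # is directed to a fresh free page.
        if not self.free_pages:
            raise RuntimeError("no free pages (garbage collection not modeled)")
        page = self.free_pages.pop(0)
        old = self.mapping.get(lba)
        if old is not None:
            self.stale.add(old)  # the previous copy becomes garbage to reclaim later
        self.pages[page] = data
        self.mapping[lba] = page
        return page

    def read(self, lba: int) -> bytes:
        # Look up where the "car" is parked, then fetch it.
        return self.pages[self.mapping[lba]]


if __name__ == "__main__":
    ftl = ToyFlashTranslationLayer(num_pages=4)
    ftl.write(10, b"car A")         # placed on physical page 0
    ftl.write(10, b"car A, moved")  # rewritten to page 1; page 0 becomes stale
    print(ftl.read(10), ftl.stale)  # b'car A, moved' {0}
```

Because flash cannot be rewritten in place, the second write to the same logical address lands on a new page and the old page is only marked stale. Real controllers must later reclaim such pages, which is one reason controller design grows harder as drive capacity increases.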

Globally, the enterprise SSD market has long been dominated by South Korea’s Samsung Electronics and SK Hynix, which together hold more than 70% of the market. China’s enterprise SSD industry is still in a phase of rapid catch-up. When InnoGrit was founded in 2017, mainstream storage was shifting from mechanical hard drives to SSDs, and data interfaces were moving from SATA to the faster PCIe. This shift opened opportunities for domestic startups.

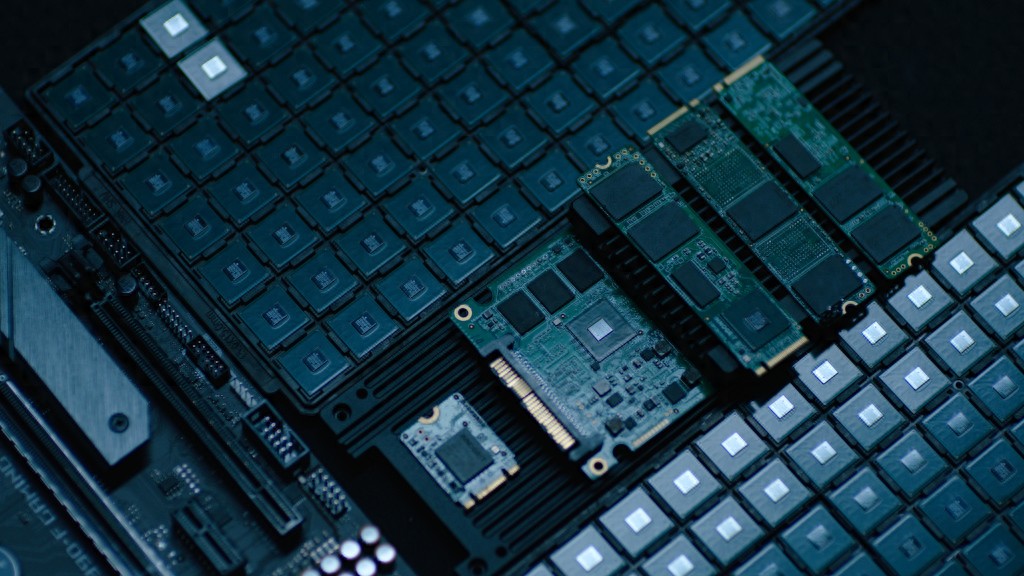

Reference image of InnoGrit’s SSD controller chips and SSD modules used in PCs and servers.

“From 2017 to 2021, domestic manufacturers focused on converting technology into products. From 2021 to 2024, after solving product issues, the challenge has shifted to finding customers,” Zhong Xiaohui stated. The push for domestic alternatives has objectively advanced the development of China’s storage chip industry, while the AI boom serves as an even greater catalyst.

At the “Wukong Intelligent Computing” 6876P computing center in Lianyungang, Jiangsu, rows of servers deliver 6,876 petaFLOPS, roughly 6.9 quintillion floating-point operations per second. After hardware and software optimization for the full-parameter version of DeepSeek, Wukong achieved a throughput of more than 6,900 tokens per second, allowing enterprises to launch AI applications in as little as three minutes.

As data volumes grow, computing power and storage capacity both become more important. Cold data is becoming less common as more of it turns warm or even hot. In the past, data in financial systems or traditional data centers would sit unused once it was five years old. Now, once models are in operation, they need real-time data throughput, turning previously cold and warm data into hot data.

Jiangsu Zhonghuan Cloud Control IoT Technology Co., Ltd. is using Wukong to develop a sanitation model and explore intelligent applications. Sanitation workers wearing smart wristbands transmit vital signs, location, and task progress in real time, allowing the system to adjust work routes automatically. Autonomous cleaning vehicles and drones share real-time data on road conditions and garbage distribution, and operational strategies are updated every second. Through virtual-physical mapping, coordinated scheduling, and autonomous collaboration, traditional sanitation operations are evolving into new intelligent models. “In the past, we called smart sanitation information management; now we call it embodied intelligent agents. The difference is that the system is no longer just a brain processing data. It enables every device, worker, and operational link to become a digital entity that thinks, communicates, and evolves on its own,” said Xu Lei, Executive Director of Zhonghuan Cloud Control.

Meanwhile, applications like DeepSeek have opened the door to inference and edge computing. Lightweight model design, hardware adaptation, and lower deployment costs have shifted demand for computing power from training to inference: training tasks are concentrated in the cloud, while inference tasks are pushed down to edge devices. As massive amounts of data become more active and the pursuit of low-latency inference intensifies, demands on storage rise accordingly.

Storage Technology Upgrades Driven by Large Models

In the surface polishing industry, fine craftsmanship is a formidable technical barrier. AI’s value lies in continuously accumulating process data to build smarter robotic brains that refine that craftsmanship further.

Founded in 2018, Sophis Intelligent Technology (Shanghai) Co., Ltd. moved from acting as a robot distributor to developing its own products, focusing on manufacturing applications such as polishing, cutting, drilling, and deburring. Founder Du Ling said that integrating AI is essential to making robots smarter. The team developed an intelligent polishing machine whose data can be viewed on smartphones and computers, keeping employees away from dust and noise. The machine records key process data and parameters, such as pressure, temperature, speed, and materials, produced when experienced workers polish parts. The goal is to build a polishing model that meets diverse product needs and improves craftsmanship.

This highlights the urgent need for both computing power and storage. According to InnoGrit, collecting raw data and inference logs generates large volumes of data, which calls for massive write capacity and high-speed reads. Data cleaning and model training involve highly concurrent mixed reads and writes, with greater emphasis on random read/write performance. Different data application scenarios are beginning to place distinct requirements on storage chips.

Traditional data centers typically use SSDs with capacities of 4TB to 8TB, but since the emergence of DeepSeek, capacity demands have risen to 32TB, 64TB, or even 128TB. As flash capacity increases, so does development difficulty, much as a taller building requires stronger structural integrity. InnoGrit’s products are already serving a variety of niche applications, and this diversification demands stronger differentiation and faster technological iteration from storage chip companies and SSD manufacturers.

In fact, AI is driving the evolution of storage technology. “In the past, many domestic data centers were still using mechanical hard drives. Two years ago, they began switching to SSDs due to speed requirements, transitioning from SATA to PCIe 4.0, and now we are entering the PCIe 5.0 era,” Zhong Xiaohui explained. After the launch of ChatGPT in 2022, the application market represented by AIGC began to demand higher performance and capacity from storage. The emergence of DeepSeek has facilitated the application of large model inference, and the new generation of PCIe 6.0 SSDs and storage-class memory solutions based on CXL interfaces are gaining attention. These technologies will support large model data center cloud services and local deployment all-in-one machines in new ways, accelerating the implementation of open-source large models like DeepSeek. “The emergence of AI has accelerated the market introduction of SSDs; it took us about a year to introduce them to standard server manufacturers, and in the first half of 2024, shipments are expected to increase tenfold.”

Computing, storage, and transmission advance in tandem. Domestic AI chip companies are using more open architectures such as RISC-V to build products that span edge servers to cloud servers. Meng Jianyi, CEO of Zhihe Computing, noted that for RISC-V to break through in high-performance computing, it must not only reach high performance in general-purpose computing but also integrate AI acceleration at the architectural level to become AI-native.

“Storage has always followed the developments in computing power and transmission capabilities. Whenever one end rises, you must keep up,” Zhong Xiaohui asserted. To match differentiated storage solutions to various application scenarios and support computing power demands, the team’s focus this year is on developing storage controller chips and solutions that meet future AI needs. “There are still many manufacturers in the global storage controller market. To carve out a niche and establish a stable presence, we must excel in the iterative upgrade process and offer unique solutions. In the future, domestic manufacturers must not only focus on meeting domestic replacement needs and sustainable product iteration capabilities but also emphasize the ability to expand internationally. This will be a necessary phase for domestic storage companies over the next 3-5 years, or even 5-10 years.”
